AI & Law Stuff

#9 Vibe-coding, vibe-lawyering, and vibe-legislating

Ready to vibe-law? (1)

Lawyers do not spring forth from the thigh of the Leviathan to help you out with your leases and disputes: they are created, through a specific process that they like to think makes them special (law school), credentialled in ways that offer them some powers and perks (as we saw), and, perhaps even more importantly, often equipped with a full apparatus of tools and techniques to practice their craft.

Some of these tools are of the mundane type: (most) lawyers are humans, and presumably benefit from productivity tools such as email, word processing, cloud servers, and other SaaS of that kind. Others are more specific, targeted at the legal profession qua legal profession: this is the realm of the legal publishers, legal-tech startups, and other gear designed for lawyers and jurists everywhere.

While often taken for granted, the latter tools are a critical component of the legal process: law, as we saw last time, requires gathering context, and some part of that context sits in proprietary databases, or might be challenging to locate without help from some kind of technical apparatus. While in theory you could practice solely out of your printed version of the Code civil (which is a tool in itself), using pen and paper (also tools!), modern practice often requires far more.

Hence the need for law-oriented tools, which have a lot going for them:

Proprietary data and scale, of course;

Pedagogical value: for junior lawyers, working within a structured legal tool can be a form of training in itself, a way to teach a certain discipline;

Sociological value: mastery of the dominant tool in a practice area is a form of professional capital, as it makes you legible to peers, valuable to clients, and employable across firms (not to mention the career opportunities); and

The certainty, once you master the tool, that a given input reliably leads to the expected output, often with a clear, auditable trail.

But we could also list a number of limits of these tools in general:

They are rarely developed by lawyers themselves, or at least not primarily, but by engineers concerned with the median-use case;

They target some skills or needs that might not (entirely) be yours; and

They entail costs and accessibility issues, one of which is switching costs: people soon get accustomed to a particular tool and are loath to part with it.

Broadly, then, existing legal tools necessarily propose a one-size-fits-all approach that may leave a lot of efficiency on the table.

Anyhow, another AI post went viral recently, this time precisely on the topic of using Claude as a general-purpose tool instead of any of the dozens of offerings in “LegalAI”: Harvey, Legora, and the like. In “The Claude-Native Law Firm”, Zack Shapiro recounted how he could do much more with Anthropic’s Claude itself than through the LegalAI providers.1 In particular, he wrote:

I’ve created custom instruction files, called “skills,” that encode my analytical frameworks, my preferred formats, my voice, and my judgment about how specific types of legal work should be done. When I upload a contract for review, Claude doesn’t apply a generic framework. It doesn’t even apply my firm’s framework. It applies my framework, the one I’ve developed over a decade of practice, automatically. The difference between a firm playbook and an individual lawyer’s encoded judgment is the difference between giving someone a recipe and teaching them how to cook.

There is something here, and Shapiro himself followed this up with a piece on “The Judgment Premium” that feeds into the whole literature we discussed recently about “taste”. His image of a lawyer exercising that judgment, assisted by a general-purpose AI, is appealing.

Still, Shapiro’s experience, while interesting, only goes so far: the Claude-native law firm, if it is to prosper, assumes a lawyer who already knows what “good” looks like.2 It may also satisfy different clients than the ones that trust Big Law. While I have no reason or ground to challenge Shapiro’s contention that Claude allowed him and his firm to compete with larger law firms, there is a reason Big Law sometimes has so many layers of humans working on a product: call it some kind of “defence in depth” that, hopefully, prevents the worst blunders. Self-reliance is great, but it’s also often a vulnerability.

Moreover, looking at the question in terms of tools and uses opens up a different consideration, one where the “jagged frontier” runs not between the technologies or providers, but between the lawyers themselves.

A simple divide can be made. On one side are lawyers for whom, just like a lot of workers in general, the existing tools are to some extent constitutive of their professional identity. Their work is embedded in institutions, and institutions run on shared tools. On the other side, one can find lawyers like Shapiro, for whom the one-size-fits-all approach leaves too much on the table, and represents someone else’s workflow imposed on their judgment.

Nothing prevented the latter from learning Python and building their own tools five years ago (I was teaching it!). What AI does is lower the threshold dramatically, making it far easier to start on that path and to build something that actually works, or seems to work.

But in doing so, it also makes the divide sharper: the first group’s approach becomes more visible as a choice, not a default, while the second group’s willingness to get their hands dirty becomes a more legible form of competitive edge. The profession has always had both types; what is new is that the tools now sort them.

Ready to vibe-law? (2)

But fine, let’s talk about vibe-coding - or even coding - as a lawyer.

There is much going for it: professionals are best placed to know exactly what they need and what could ease their workflow; they are conscious of both the practical steps taken to do any legal task, and the needs a given task is meant to satisfy. In other words, lawyers are Hayek’s “man on the spot” for legal practice.

Meanwhile, the costs of getting your hands dirty and building your own tools are sharply decreasing: you can vibe-code something that works, depending on what you are asking for (and your definition of “works”).

And so, increasingly, lawyer after lawyer is discovering that they can just do things (and, optionally, post on LinkedIn about it). Entire legal communities - such as LegalQuants - are growing around that realisation. “Vibe-code an app” is now part of what my law school students are graded on.

There are, of course, limits to this approach, be it in terms of reliability, vulnerability, scalability, etc. As the developer of a legal tech app, I can testify that taking something from development to production is no easy feat: database management, server logs, etc. - there are dedicated professions for this, and believe me, it shows. Not to mention AI fatigue, the difficulty of managing agents working too quickly for you to appreciate the work done (and misdone).

But if we take a step back, it might be worth looking at the underlying notion of automation in itself, because this is what is at stake: the impetus to build apps is often the wish to delegate part of the work (the annoying part, hopefully) to a process that produces an output of satisfactory quality.

And the key part of automation, and what makes building agents difficult, is that you first need to know (i) what you want; and (ii) how to get there. Coding requires discrete, concrete steps, and forces us to reflect on what these steps are, a type of grammatisation of one’s practice. But not every task lends itself well to such explicit spelling-out - many, in fact, don’t, as they require something of us, some kind of appraisal or (again) judgment that cannot be put into words, or at least not fully.

Further limits of automation have also been well known for a long time. Lisanne Bainbridge’s “Ironies of automation” (1983) lists a few, including:

The Deskilling Effect: Automating routine tasks removes opportunities for humans to practice those skills. Optimizing for efficient output potentially devalues the internal changes and deep understanding gained through the process of human effort and struggle.

The Monitoring Trap: When automation works, the human monitors passively. When it fails, the now-deskilled human must suddenly intervene in a complex, unfamiliar situation. The “easy” stuff is gone, leaving only the hard exceptions.

Of course, that could be said of any technology. But the deeper irony of Bainbridge is this: the people most qualified to automate a task are those who have mastered it the hard way. By removing the drudge work that was the training, fewer such people might exist in the future.

In other words, lawyers are now building tools that presuppose expertise we are simultaneously making harder to acquire. This is the needle to thread, and the hard question ahead: automating enough to stay competitive, while preserving enough friction to keep producing lawyers who know what they are doing and can assess whether that automation is worth anything.

AI laws are coming for you

A few weeks ago we discussed how creating norms is one of the most common reflexes of our times when faced with any new phenomenon, regardless of how well the phenomenon is understood. Something needs to be done, so let’s have a law about it, the reasoning goes - and we might as well call it “vibe-legislating”.

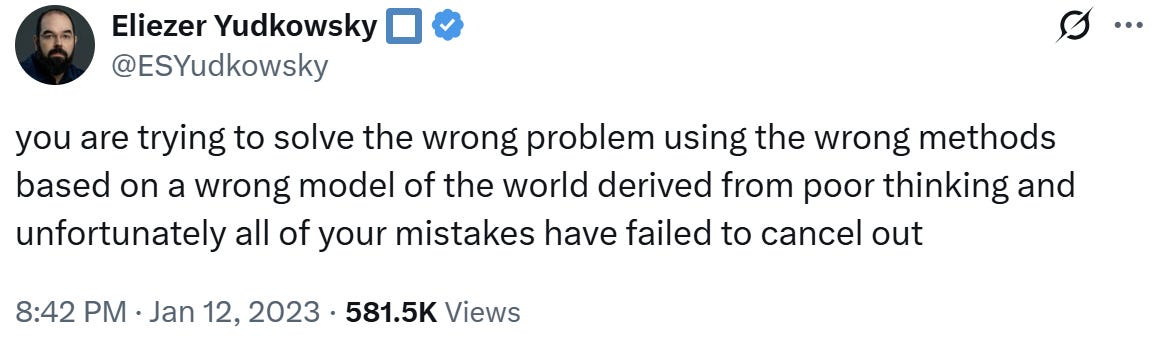

The problem is that it often misses its target or creates far more problems than expected. Or, to cite a classic:

The latest offender in this respect is NY State Senate Bill S7263, which, as per its explainer, would:

prohibit[] proprietors of A.I. chatbots from permitting the chatbot to give substantive responses, information, or advice or take any action which, if taken by a natural person, would constitute unauthorized practice or unauthorized use of a professional title as a crime in relation to professions whose licensure is governed the education law and judiciary law. This bill ensures professional advice is provided only by licensed human professionals and not by artificial intelligence or chatbots.

While the statement of reasons relies mostly on the experience of therapists, the Bill as drafted would explicitly extend to legal advice provided by AI, insofar as it breaches the local provisions reserving the practice of law to licensed attorneys.

Now, I am no expert in New York law but I expect that, as in most jurisdictions, an uneasy compromise has been found between this prohibition and the fact that many, many people “practice law” in some respects without being licensed attorneys, be they corporate counsel, bureaucrats, or your neighbour advising you on the lease you are about to sign. The key question is whether this compromise will extend to the advice provided by an LLM.

But assuming a maximalist position on this issue, it’s hard to see what problem this bill solves. Certainly, there may be an issue of AI providing wrong answers to legal questions: there are, so far, 26 pro se litigants in the AI Hallucinations Cases database for the state of New York. But barring them from using a chatbot will not suddenly direct them toward an attorney: they are pro se for a reason! Besides, attorneys themselves account for nearly as many New York entries in the database (20), which rather undermines the premise that licensed attorneys are more deserving of trust in using AI.

More fundamentally, the bill says it “ensures professional advice is provided only by licensed human professionals.” However, as has been noted, the alternative to AI advice is often no advice at all, for the people who can least afford it. Surely that can’t be a good idea.

1. Shapiro’s post is not the first to make that point (Jordan Bryan made it three months ago, and went further into the drivers of LegalAI), and the idea that general-purpose models beat over-engineered, specific-purpose tools and approaches is also frequently making the rounds.

2. Also, at some point Shapiro says that “Knowledge that takes years of mentorship to transmit is now an instruction file that works from the first draft.” With respect, I doubt anything that truly takes years to convey can fit in an instruction file, or can even be put into words to begin with.