AI & Law Stuff

#6 Legal privilege, yet more AI hype, and reports from the future of work

The judge can read your chat

Sometimes you need to share with your lawyer things you would prefer other people not be able to know or discover. Maybe you are about to do a thing, and you are not totally sure if that thing is legal, and you would like your lawyer’s opinion on that, but you would not like to create a written record that says “hey, is this thing legal?” that a prosecutor can later wave around in front of a jury. If you cannot do this (obtain legal advice without consequences), maybe you’ll do more illegal things, or maybe, when caught, you won’t be able to benefit from your rights to the fullest extent.

This is why we invented legal privilege. The basic deal is that you can talk to your lawyer candidly, and those communications are protected. Nobody gets to see them: not the other side in a lawsuit, not the government, not a regulator, nobody. This helps you, but at another level, it also helps everyone: better legal advice, fewer illegal actions, broader use of the law. And thus, while the entire rest of the legal system is built around the idea that courts should have access to all relevant evidence so they can figure out what actually happened, privilege is the big exception: some conversations are so important to the functioning of the legal system that the legal system itself agrees to be blind to them.

So that’s one way to think about privilege: privilege exists for you, the client. You are the one who needs to be able to talk candidly to your lawyer, and you are the one who would be harmed if those communications were discoverable. On this view, privilege is a purely functional thing - a tool that makes the legal system work better by encouraging people to be honest with their lawyers. The lawyer is almost incidental; the lawyer is just the person you happen to be talking to.

But there is another way to think about it, as something closer to a sacred attribute of the legal profession. Lawyers are special: they are officers of the court, and have ethical obligations and duties of confidentiality that exist independent of any particular client’s preferences. Privilege, on this view, is part of what makes lawyers special, as a power that attaches to the lawyer’s role in the system, not just a convenience that attaches to the client’s needs. The lawyer is a kind of priest, and the communication is a kind of confession, and the sanctity of that relationship is something the legal system has a deep institutional interest in protecting for its own sake.

This distinction matters when we reason about the boundaries of privilege, and how to delineate them. In fact, the focus on lawyers likely stems in part from the need to draw a strict line between what is privileged and what is not - hence the notion of “attorney-client privilege”; but it has also taken on a life of its own.

I am not saying that one of these views is right and the other is wrong, but it is useful to know which one someone is working from when they start making arguments about whether a particular document is privileged, because it will tell you a lot about where they’re going to end up.

Anyhow, in a recent decision (reported via) on a motion for discovery (here), a judge at the SDNY refused to apply privilege to conversations between a defendant and an LLM.

While the judge ruled from the bench [Updated February 18, 2026: a full reasoned decision is now available here], the prosecution’s motion suggests the kind of arguments that could lead to that outcome.* For instance:

The attorney-client privilege reflects a policy balance that requires the presence and involvement of licensed attorneys. The AI tool that the defendant used has no law degree and is not a member of the bar. It owes no duties of loyalty and confidentiality to its users. It owes no professional duties to courts, regulatory bodies, and professional organizations. The policy balance embodied by the attorney-client privilege cannot be mapped onto a machine that provides what may resemble legal advice.

This is the second view described above, and is likely a fair position under existing American law on privilege.1 But my point is that, if one accepts the other view - privilege is functional, it benefits the client - then some of the arguments made here sound distinctly weaker. Consider this point:

The AI tool is obviously not an attorney. And, outside of certain narrow exceptions not relevant here […], the attorney-client privilege does not attach to non-attorney communications. The defendant’s use of the AI tool here is no different than if he had asked friends for their input on his legal situation.

Well, I love my friends, but none of them is currently able to pass nearly all available bar exams (they struggled enough getting through one or two), and none of them possesses the trillions of tokens of latent legal knowledge of your run-of-the-mill LLM. And whether AI’s legal advice is “good” or accurate is beside the point (human lawyers err too): the fact remains that people are using LLMs as lawyers because they trust them to produce outputs nearly as good as a lawyer’s (without the cost).

And while this point was relegated to a footnote, I think it is crucial for the argument I am making here:

The AI Documents are unlike a client’s confidential notes, which may be privileged if they (1) memorialize privileged conversations with an attorney or (2) organize a client’s thoughts for communication to an attorney and the substance of the notes are actually communicated to an attorney. […] Here, the AI Documents are non-confidential communications with a non-attorney AI software. Only after this AI analysis was complete did the defendant share the AI output with his attorneys.

Yet, for better or worse, this is now how people brainstorm and take notes on what they want from their legal counsel, and what it should focus on. The court’s framing makes sense in a world where the client is relatively passive and exists mainly to receive legal wisdom from a credentialed attorney; but if you accept that clients participate more actively in their own defense, and that the tools they use to prepare for those conversations are part of the process of obtaining legal advice, then there is a reasonable argument that those conversations should be protected too.

None of this means the judge (or for that matter, the prosecutor) got it wrong. Under existing law, the answer is probably pretty clear: no attorney, no attorney-client privilege. But the interesting question is whether existing law has the right framework for a world in which the thing giving you legal advice is not a person, not a friend, not a book, but something that is - in terms of the quality and specificity of the advice - genuinely closer to a lawyer than to anything else we have had before.

AI hype and its uses

Two weeks ago, I described my reading of the discourse over the past few months (or years) about AI for coding, pointing in particular to a dismaying polarisation:

between the hype-mongers (“[insert just-released new model] built me three different apps in a single hour, reorganised my mail folder, and fixed my marriage”) and the rational, down-to-earth types admitting to some interest in agentic/automated coding, but with a tepidness meant to display a “I am not fooled” attitude.

This dichotomy, needless to say, is even more pronounced in the general discourse about AI, especially when it comes to its usefulness and its potential to shake up many existing institutions and professions. The hype-mongers bellow even louder that AI will change everything, while the naysayers (often, but not always, drab academic types) remain fixated on limits and issues that have long been overcome or proven irrelevant.

Yet the hype just received a fresh boost with the recent release of two new, truly impressive models from Anthropic (Opus 4.6) and OpenAI (GPT-5.3-Codex). In this respect, an article on X (formerly Twitter), entitled Something Big is Happening, was recently shared by many, including accounts I otherwise trust for their common sense. The author, Matt Shumer, took up his (mostly AI) pen to herald a new era in which the models have become so competent that our jobs are on the line if we don’t adapt, and fast.

Leaving aside certain things better suited to a footnote,2 there are several things to take from this piece.

First, forgive me for the Gell-Mann effect, which was quite strong when reading things such as:

Legal work. AI can already read contracts, summarize case law, draft briefs, and do legal research at a level that rivals junior associates.

We have already discussed how the legal profession might be more complicated (and indeed, rich) than this caricature of what a lawyer does. Besides, while I am ready to believe that the newer models are even better at all this,3 the previous models had already made inroads in this respect - without (so far, certainly) shaking the legal profession.

Second, the essay makes a number of valid claims, one being that a lot of people misjudge the value of AI models because they last tried the free version of ChatGPT two years ago. In fact, to the extent Shumer’s article points to the gap between public perception and reality, it hits on something real and under-appreciated.

This is why, third, there is a possible way out of the dichotomy described above between the hype-mongers and the naysayers: one that recognises that we have been here before (in terms of exaggerated levels of hype), but that things are sticky, and that technology adoption is hard and sometimes requires nothing short of generational change. And even if the claims about the current models’ abilities were accurate (which I am ready to believe), this would mean only one thing: that there is a growing gap between the best that technology can do and what people choose to settle for instead.

But this gap has been with us forever: famously, Germans still use fax machines in many different applications (and last year saw some reports, probably not serious, that you can now use these machines to query ChatGPT - progress of sorts). Or consider the US banking system, which still relies heavily on decades-old COBOL code running on mainframes because the risk of rewriting it outweighs the efficiency gains of modern languages.

Now, I am much more interested in what happens when that gap widens, as it is bound to, and I have hardly seen any good analysis of it. Does the distance between what is possible and what is adopted eventually become insurmountable, calcifying institutions around legacy tools? Or does a wider gap increase the payoff to whoever finally bridges it, creating winner-take-all dynamics? If the latter, then people are right to stress the importance of agency, and to point out that you can do much more with AI.

And so, this is where I part ways with Shumer’s conclusion that everyone must adapt because AI is coming for them. The framing is backwards: the reason to close the gap is not fear of obsolescence - it is that the gap itself is where the interesting work now lives.4

The robots are coming (still) (again)

Still, in a world where Anthropic’s move into legal tech has sent key legal stocks stumbling, it is legitimate and healthy that people constantly wonder about their jobs. But the narrative (AI automates tasks, workers become redundant, adapt or die) is not just likely wrong; it’s also uninteresting. A few recent pieces suggest a richer picture.

The first is an interview-based study published in the Harvard Business Review (widely shared, likely because it struck a chord), which claims that “AI does not reduce work, it intensifies it”.

The authors find that this intensification takes shape through three principal vectors:

Task expansion, with workers dabbling in areas where they previously contributed nothing, simply because AI lets them do so at what they believe, anyway, is an adequate level of skill. The key example here is coding, because that’s what models are best at, but it’s certainly true that some, let’s say, “softer” jobs (like marketing or communication) might be easily undercut by the text-generation machine.

Blurred boundaries between work and non-work, because AI can be set to work on, or brainstorm about, any new idea one has at any given time. This is interesting, but I doubt it’s much of a change for the many jobs that already, to a large extent, require you to brainstorm at any moment of your waking life.

More multi-tasking, since for many people AI is the perfect partner for projects where one person does not suffice. At the same time, as this tweet rightly put it:

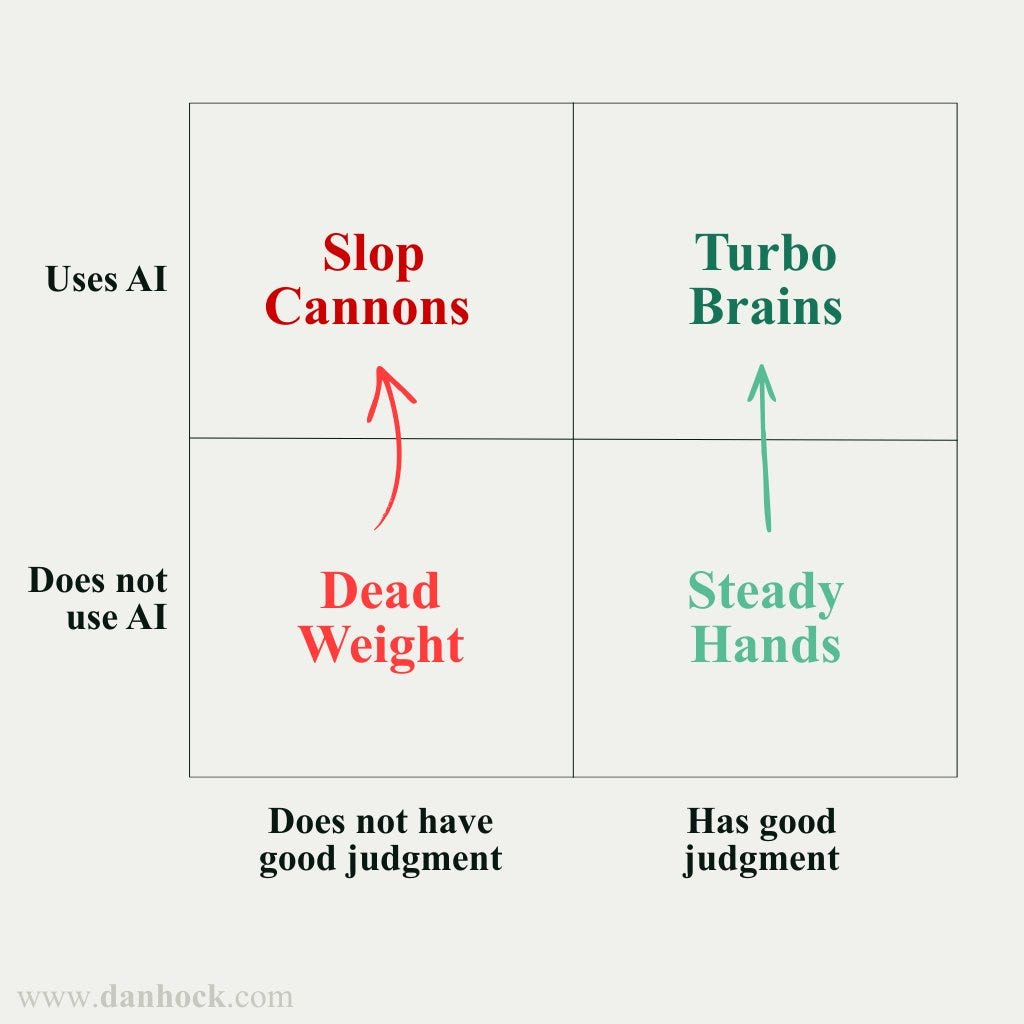

According to the report, this has two types of costs: personal (more decision fatigue, possible burnout, difficulty prioritising) and systemic (more projects, conflicts between AI-enhanced dilettantes and experts). But I guess an easier way to describe all this was offered by this rather crude 2x2 matrix on Twitter:

Ultimately, a lot of this comes back to judgment, a theme we have covered in the past and one that deserves a dedicated piece at some point.

Three additional tidbits are also worth considering:

Cross-task productivity: as described and explained by Philip Trammell (here, via), when your job is composed of several tasks, the degree to which automation can reduce your workload depends on how those tasks interact. While Philip offers the example of coding and debugging, the situation is likely very similar in the legal field: time spent doing the legal research is time saved when checking whether the argument makes sense, because you checked the argument while researching and writing it! (Same point made here.) And thus, even if AI one-shots a legal output, you may not gain much time if you want it checked and verified to the standard of your own work.

Tacit knowledge: When I teach about agents and automation, the most important thing I try to convey is that automation is feasible only to the extent that a given task can be decomposed into clear, explicit steps. But a lot of tasks cannot, because they rely on tacit knowledge or, as Chris Walker puts it (here), “unreflective” knowledge: stuff that cannot be decomposed into clear, explicit steps. And there is much to take from Walker’s prediction that, as AI takes away the drudgery, more work will be devoted to what relies on that knowledge, giving ever more importance to the humans who possess it.

Human touch: On his blog, Adam Ozimek recently gave convincing examples of the “constant, unwavering demand for the human touch”, and suggested that it could be a normal good - i.e., one that grows in demand as incomes improve. This is why, e.g., “in almost every town in the United States, the very night you are reading this sentence, terrible bands are being paid to perform live in bars” - despite the ubiquity of free (and excellent) musical performance. As we described last week, the legal profession to some extent likely participates in this human touch economy, and it’s possible this aspect will matter increasingly.

And so, the simpler story attached to AI hype (adapt-or-die) is not only (likely) false, it is also boring. AI will certainly bring changes to jobs and professions, but it will do so in very interesting ways, and one should anticipate these not with dread or stress, but hopefully with eagerness and an ability to appreciate the changes that are coming.

* [Correction February 14, 2026: this part was amended to clarify that the quotes stem from the motion, not the decision itself].

1 One fascinating point in the analysis, however, is the judge’s reliance on Claude’s Constitution and its disclaimer that Claude does not offer legal advice to conclude that this was, indeed, not legal advice worth protecting.

2 The “written by ChatGPT” feel, to begin with, but also the, let us say, broader credibility concerns regarding the author.

3 I try new models mostly on coding tasks, rarely on legal inputs.

4 See also this piece, which makes the same kind of apocalyptic predictions and lands on calls to change your entire approach to work, but also has a lot of insights about AI revealing some blind spots of existing systems (e.g., people whose “strategic” value was just thoroughness, or the ways the promotion system does not necessarily reward the best workers).
