AI & Law Stuff

#5 Hallucination squatting and/as the future of the legal profession

The goals of a legal education

Sometimes, people will do things that are against the law, or that could be construed as such. Society has built an entire machinery to handle this scenario, not only at the level of courts and the judiciary, but also in all the roles designed to avoid it in the first place, through legal advice, compliance, and so on. Although the optimal amount of fraud may not be zero, law, and the desire not to breach it, nonetheless serve as a reference point around which an industry, a profession, and an ethos are built.

So when a breach happens and a person is in legal hot water, this is typically taken as bad news. Paperwork has to be filed and answered, and lawyers come in to take care of that and of the aftermath. But there is also, often, a person there to be reassured, patted on the back, and taken care of: someone who needs to be told that all will be fine because they did not breach the law, or maybe not that badly.[1]

This is one of the roles of lawyers (though certainly not the only one), and it needs to be kept in mind when reading the evergreen reports of the demise of the legal profession at the hands of AI. These reports often stem from the assumption that lawyers exist mostly, if not entirely, to produce content in the form of legal advice, presumably in writing, and it is equally evergreen to point out (as I just did) that this assumption has its limits.

Still, the hot takes about the future of lawyers are understandable. Seeing AI in action (or watching it pass the bar exam) can quickly lead one to that conclusion: at first glance, the output looks and feels lawyerly, and it took seconds to produce, so lawyers must be cooked.[2] It does not help that many are primed to respect legal documents qua legal documents, thanks to the authority of style. (This is well understood by the excellent “Art Project About Lawyer Vibes”, a “free, online, and open-source tool that lets you give any complaint you have extremely law-firm-looking formatting and letterhead.”)

On the other hand, lawyers are still there, being hired and working hard, and it is famously hard to predict the future. Economics is of no particular help here, given that the legal market is far from efficient to begin with; in particular, it remains to be seen whether a Jevons effect (cheaper legal work driving up total demand for it) will save lawyers from doom.

All this creates a certain ambivalence about the future of the legal market, which was well expressed in a recent New York Times article aptly titled “Interest in Law School Is Surging. A.I. Makes the Payoff Less Certain.” In particular:

So far, more efficient grunt work hasn’t stopped firms from hiring new lawyers: Law students who graduated in 2024 had the highest employment rate ever, according to the National Association for Law Placement. More than 90 percent found jobs.

Things could get dicier: The association also reported that law firms had hired fewer summer associates in 2024 and 2025, which it said suggested “that there will be fewer graduates employed by large firms over the next few years.”

Testy said that it was possible A.I. could shrink job openings, but that it was also possible it could expand what lawyers do. “It could be used to streamline small disputes in court, for example,” she said.

But the key insight of this piece lies in its conclusion, which offers a way to approach these debates:

Cooper has applied to five law schools, after carefully checking into how to afford the cost of the degree.

“I factored so many things in, even looking at projected salaries for starting lawyers,” she said. But A.I. wasn’t part of her calculations. Instead, she’s banking on the more timeless appeal of a legal education.

“I feel like law is one area where you can see how society really runs,” she said.

Whatever happens to the supply of law (thanks to AI-assisted lawyers, or to AI by itself), demand is unlikely ever to subside because, if anything, law (of whatever quality) is ever more present in our day-to-day lives, for better or worse.

The AI will answer you now

When looking at the actual “legal knowledge” part of a lawyer’s job, a large part of it can be described as the various ways legal information flows between actors so as to create an understanding of one’s legal position (and sometimes to create legal situations out of that understanding).

It would not be an exaggeration, then, to say that a key skill for a jurist lies in her ability to do legal research: to identify the information needed at a given moment, discard what is irrelevant or unneeded, and package it in ways that will please and/or help her client. In other words, many lawyers are in the business (again, among other things) of giving answers to (legal) queries, so that their clients can act upon those answers.

Through that lens, many other professions are in the same business! This may include, for the sake of argument, developers and engineers, who are typically asked to provide their expert knowledge in answer to questions, and then to act upon those answers (e.g., by producing software).

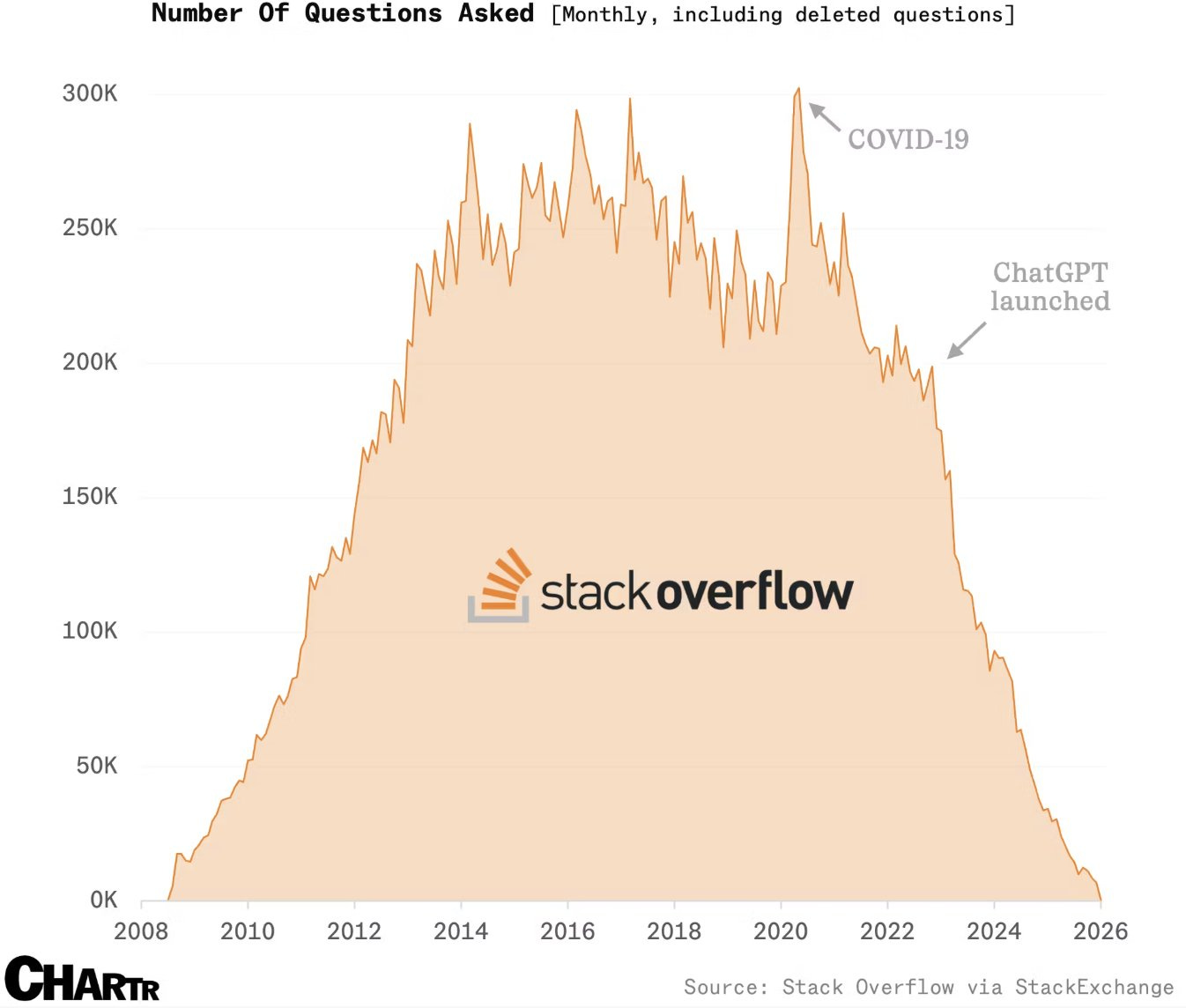

In this respect, asking developers questions and receiving answers will ring a bell for anyone who learned to code before ChatGPT: Stack Overflow, a forum where people ask coding and programming questions.[3] But if this holds any lesson for the future of lawyers as an answer-providing profession, that lesson might be grim indeed. Here is a chart.[4]

Now, of course, the fact that developers stopped learning from each other through Stack Overflow does not mean they disappeared (even though the same employment worries are very much aired in this field). The point is rather to offer yet another data point supporting the potential of AI as an answer-providing actor. This colours the politics over whether popular LLMs should even be authorised to answer legal questions.

Be that as it may, this parallel is also useful for surfacing key distinctions between code and law, and thus between coding answers and legal answers:

Code, at some point, has to work, which offers a quick way to verify it.[5] By contrast, law can offer several valid answers to a single query, and the verification mechanisms (e.g., a court judgment, a new law) are not readily available.

Relatedly, coding answers are, if we ignore versioning and upgrades, always true given a certain technological stack: the same function would take the same arguments and yield the same type of output. Legal answers can be relative, depending on what other actors in the loop are doing or alleging.

Code does not have to take the expectations of the asker into consideration, nor does the asker expect their feelings to be part of the input: everyone involved just wants something that works and meets the specifications. A legal answer, by contrast, may well depend (at least if a lawyer is worth her salt) on the particular client in need of that answer.

Most code is not written or deployed against an opponent actively trying to make it fail. Legal answers, by contrast, exist in an adversarial system where the other side is paid to find weaknesses in your position, which changes what counts as a "good" answer: defensibility matters as much as correctness.

Yet another thing bears mentioning: developers did not abandon Stack Overflow because the community became toxic or because the answers proved bad; they left because they found a way to get answers without the friction of waiting for, and having to parse, someone else’s thoughts and ideas. In doing so, they moved partly from deliberation to direct consumption of outputs, and that might be another way to look at the future of the legal profession.

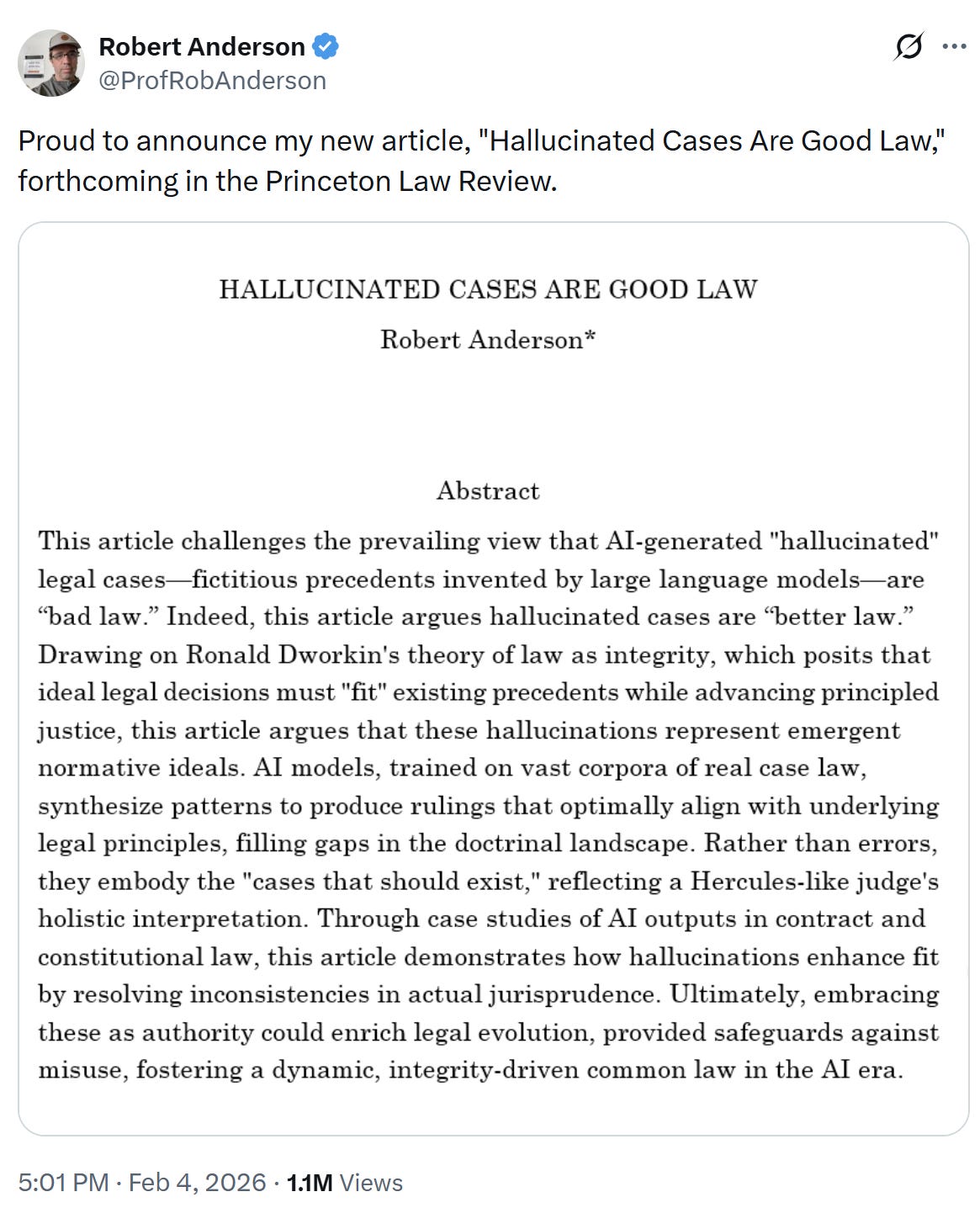

Hallucinated case law is the best case law

When explaining why LLMs hallucinate legal sources, one key variable is the models’ post-training, which tweaks them into good and helpful assistants: the kind of assistant that would do anything to help you out. This often explains why one may find hallucinations backing the key legal allegation of a particular lawsuit or defence: the harder a legal case is to make (or: the more out-of-distribution it is), the likelier the model is to output hallucinated material.

And so, in some respects, hallucinated material is often the best material that can exist to support one’s case, with no need to stretch the analogy or spend effort arguing that (hallucinated) norm X should apply to situation Y: often, AI provides one with an open-and-shut case, which is the best kind of case (if it favours you). This is also what helps in detecting hallucinations, because the other side, or the court, would rightly be suspicious of such a great precedent in your favour.

Anyhow, someone drew the most important conclusion from this, which is:

Now, this is obviously an (excellent) joke, as many have noted, if only because the Princeton Law Review would itself count as a hallucination.

But it might be worth going further than that and taking the argument seriously, to some extent: for instance, by comparing it with Steve Yegge’s description of the coding that went into Beads, a recent package meant for AI agents (H/T Simon Willison):

What I did was make their hallucinations real, over and over, by implementing whatever I saw the agents trying to do with Beads, until nearly every guess by an agent is now correct. I’ve driven the friction cost term about as low as it can go. […]

I actually got this idea from hallucination squatting, which Brendan Hopper told me about, where you reverse engineer a domain name that LLMs are hallucinating, register it, upload compromised artifacts, and the LLM downloads them the first time it hallucinates the incorrect site name.

Likewise, I am waiting for an underworked legal clinic to take frequent patterns of hallucinated material (I have the list if needed), find or incorporate parties with the proper names, and make sure the case names actually exist.

But more profoundly, if my thesis about citations ever taught me anything (which is doubtful), it is that a lot of cited material does not necessarily fully correspond to whatever that material initially said. In fact, many citations serve as a signal of what people believe an authority says, which is just as well.

In other words, the argument from authority works insofar as we (all, collectively, if unconsciously) agree that something (i) is an authority and (ii) denotes a particular argument, and the latter may not perfectly match the underlying material’s content. A collective hallucination, if you will.

What I have been reading

The adolescence of technology. Why we should talk about zombie reasoning for LLMs. School is way worse for kids than social media. This book review of Philosophy Between the Lines. On heritability. This gorgeous website about aging.

[1] As a white-collar criminal lawyer friend once told me, maybe with a hint of exaggeration, a large chunk of his annual income is predicated on the one moment when, in June, in a lodge at the Roland-Garros tennis tournament, his client turns towards him and asks: “Maître, will I be all right?”

[2] Note that this is especially (or mainly?) true of Anglo-American legal systems; ask GPT-5 for a legal output outside its training data, say, in Croatian law, and it will not produce great work, or anything local lawyers would recognise as competent. Incidentally, I think this partly explains the discrepancy in case numbers between jurisdictions in the Hallucination database.

[3] As lore has it, the best way to get a correct answer on Stack Overflow was to first post a wrong answer with a sock-puppet account, and then wait for people to come and correct it.

[4] It appears this graph somewhat exaggerates the most recent numbers, which are not yet “0” (you can go on the website and see for yourself), but the 95+% drop is matched by other data.

[5] Of course, beyond that you may want it to run well, but this is a distinct question of optimisation.
