Law & AI Stuff

#13 AI-writing stigma, AI-native lawyers, and legibility

Did an AI write this?

It’s a common theme here that lawyers do more than just provide, create, or even format legal arguments through a text medium. But they do a lot of that too, and much of the profession lives and expresses itself through the act of writing. It is, after all, a profession that attracts the kind of people who have opinions about Proust. And yet, despite law schools typically including courses on “legal writing”, senior lawyers will often deplore that the young can’t write a proper sentence.1

This complaint takes on a different tenor now that we have machines that can cheaply and quickly write any text we can imagine, often with better style (or at least, more proper syntax) than humans. And some of the past and current upheavals in the AI & Law spheres come down, as I have tried to catalogue, to the identity of the “author”.

Which is why it’s good to check how other professions engage with a present where text has become cheap. Last week, part of the discourse focused, at last, on how journalists write with AI. Two stories encapsulated, I think, two different ways of looking at this.

First, the WSJ reported on using AI at scale to produce content:

Journalist Nick Lichtenberg produced more stories in six months than any of his colleagues at Fortune delivered in a year.

One Wednesday in February, he cranked out seven.

“I’m a bit of a freak,” Lichtenberg said.

While many journalists hit the phones and cultivate source relationships, when news breaks Lichtenberg often uploads press releases or analyst notes into AI tools and prompts them to spit out articles that he can edit and publish quickly.

[…]

It can be challenging for midsize, legacy publications like Fortune to find relevance in a media era that values deep investigative reporting or viral punditry. Lichtenberg’s work helps Fortune scale the quantity of its output. Fortune said AI-assisted stories have helped drive subscribers.

Another angle could be discerned in an article by Wired, which focused on AI’s role in replacing the usual scaffolding of editors and fact-checkers that institutions provide:

Heath is part of a growing contingent of tech reporters using AI to help write and edit their stories. The AI workflow is especially enticing for reporters who have gone independent, losing valuable resources like editors and fact-checkers that typically come with a traditional newsroom. Rather than just prompting ChatGPT to write stories, independent journalists say they are re-creating these resources with AI.

[…]

After speaking publicly about her use of Claude, Sun received criticism from people who were offended by the notion that AI could replace a human editor. Critics argued that AI can’t transform your ideas or challenge you as much as a human. Sun says she found the comments confusing. Most Substackers can’t afford to hire a human editor, so by adding Claude and instructing it to challenge her, Sun argues it’s made her process more rigorous.

Both stories map neatly onto some recent debates in the legal sphere. Lichtenberg is the associate who discovered that ChatGPT can draft ten memos before lunch; Sun is the solo practitioner who finally has something resembling a partner to push back on her drafts. And as in the legal sphere, there was a distinct backlash against the idea of bringing AI into it.

Now, I already suggested that a key consideration in AI writing is whether a text is expected to be (i) produced or (ii) read by humans (which entails the existence of text both produced and read, or processed, by robots). But it’s worth going further and, as some would say, solving for the equilibrium.

And in that context, it appears clear to me that a large majority of text will increasingly be expected to be produced by AI, even if read by humans. Oh, certainly, for some of that text AI-production will be merely tolerated, or we’ll be satisfied with a clean fiction of a human holding the pen - this would not be the first such fiction. But I can see humans five years from now shrugging at the mention that a given piece of text, except in very narrow domains,2 has been AI-produced: most things will be by that time.

What’s holding that back, beyond the jagged frontier of AI writing, is the remaining stigma placed on it: witness all the uses of Pangram or ZeroGPT to cast any piece of text on social media as AI-generated, or the cancellation of novels or games suspected of having relied on genAI. Yet, given the quality and convenience of using AI, I don’t see any equilibrium where that stigma does not dissipate eventually, at least amongst a majority of people.3

But if that’s the future, then the attempts to slow it down risk turning into rearguard actions, and may even backfire. I am thinking, for instance, of the mandates by certain courts to disclose the use of AI: in the current era of stigma, this only incentivises lying and shadow uses; in an era where most text is AI-generated anyway, this will be useless. And I am confident we will eventually move from one era to the other.

In all this, there is a certain irony for lawyers. A profession that has long prided itself on the craft of writing may find that the question was never really about quality. It was about authorship, and the authority we attach to a human name at the bottom. Some of that authority can be captured by, or assigned to, AI. Journalists are discovering this first, and trying to manage the distinction between different types of texts;4 lawyers will follow, probably with some lag and even more resistance. But the destination is likely the same.

Lost in translation

But this focus on writing text might also age poorly, if AI’s most consequential outputs turn out not to be texts at all - or at least, not texts meant for us.

Over at Asimov Press, Matthew Carter reports on the new ways to advance scientific knowledge with AI:

I call this the “legibility problem,” the risk that AI-generated scientific knowledge becomes incompatible with human understanding, and think it will define the next era of science. The knowledge AI systems generate may be expressed in concepts that do not map onto our own, communicated in ways optimized for other AIs rather than for human investigators.

[…]

If AI science does achieve superhuman performance, and if AI systems begin forming their own research communities around concepts that mutate faster than we can track, then the work of human scientists will shift from that of creation to that of excavation.

Reading this, one may be forgiven for thinking that the legibility problem is not new: we already have areas where institutions produce outputs through unobservable processes and on the basis of illegible considerations. I am thinking about judging and decision-making: psychology teaches us that their roots are rarely the pure legal syllogism we expect, and it’s a common, if not universal, experience for lawyers to be delighted by a win even though they would never have expected the way an adjudicator got there.

This indeterminacy of the human mind has always made me dubious of the calls for “transparency” in this domain when it comes to algorithmic decision-making.5 As Carter says of AI-led science, “if we truly want AI scientists to make breakthroughs, some loss of legibility may be inevitable”.

But if we take the parallel with judging further, we can see some ways in which legibility can be reintroduced or reimposed over the human mind’s mysteries. Legal systems everywhere have adopted two key approaches: formalism (or “procedure”), and “reason giving”.

The first is a question of observability: it enhances legitimacy and expectation management when a process runs its expected course; it can also corral decisions and outcomes in a certain direction. It strikes me that computer science and data analysis have developed their own version of this intuition under the label of ‘observability’ - the discipline of making complex systems legible not by understanding their internals but by instrumenting their behaviour from the outside.

But the second is even more important: we expect adjudicators to provide reasons, and while we do not always appreciate (or share) these reasons, we rarely question whether they reflect the actual reasoning of the decision-maker. The text is sufficient in itself, regardless of whether it represents post hoc reasoning or window-dressing: we already accept legal reasons as formally adequate even when we suspect they are not causally accurate.

And if that is the standard, then there is no principled reason it could not extend to AI, provided we build the procedural scaffolding (observability) and insist on adequate reason-giving. The question is whether we want to - a question about authority, not about legibility.

It’s a bird, it’s a plane, it’s an AI-first law firm!

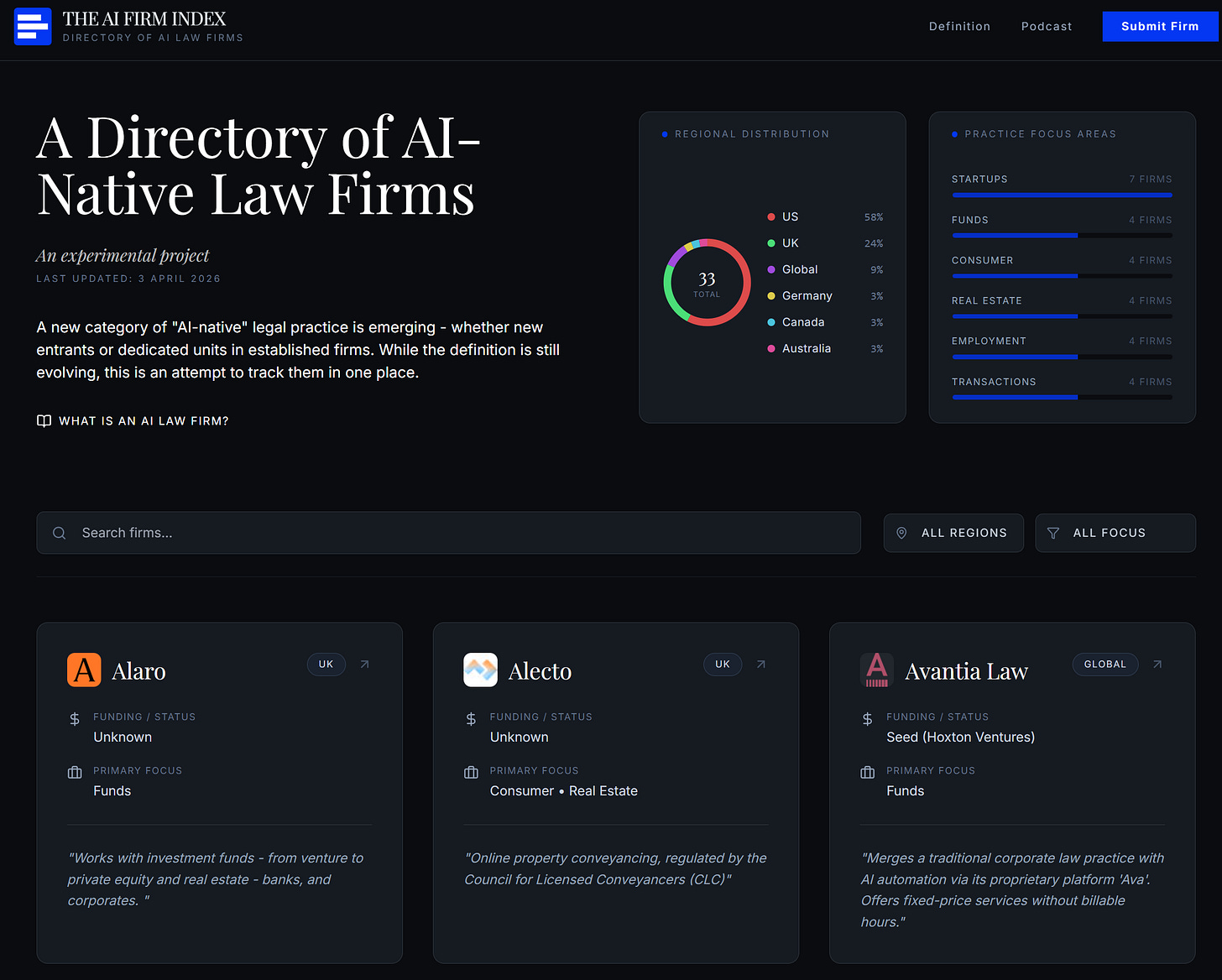

Every few weeks now, we hear about the launch of a new “AI-first” law firm or practice. It’s becoming common, and you know what happens when lawyers see something becoming common? They build lists and databases of it, with this one showcasing (as of writing) 32 “AI-Native Law Firms”.

Now, the issue with databases is often in the definition of the inputs, and there are question marks about what qualifies as an “AI-first” law firm. I am not the only one to wonder: over at the cursed social network (no, not the one you are thinking about), Rok Ledinsky asks the same question, and notes that some recent candidates look more like tech companies that happen to be working on legal matters than “law firms”. At the same time, he adds, some lawyers are redesigning their entire process around AI assistance - should they be labelled “AI-first” too?

Of course, one tension inherent in this label is that it is trying to drive a wedge between “AI-first” law firms and traditional models, but that wedge cannot be about AI itself - nearly every law firm or practice I know professes to embrace AI, if not in practice, at least in words. There is an undeniable marketing aspect behind this herding effect, but it matches the expectations of most actors in the legal value chain: clients, institutions, regulators are eyeing AI as the one thing going on, and being out of that conversation is not an option.

Hence, probably, the focus on AI being “first” or “native” by these new outfits: it’s trying to one-up the basic “we now use AI” to highlight that AI is front and center of their work. But in doing so, they promise a different model than law firms (I note that ArtificialLawyer calls them “NewMods”), one that assumes that legal work can be handled with an “AI-first” approach, that there are jobs, tasks, and market demand for legal work performed primarily by or through AI tools. And that a business model can be built out of this.

While this is not an undue assumption, I think the key here should be to distinguish between existing and new tasks that can be captured by that approach.

The former have the advantage, well, of existing. But one limit to this is that traditional law firms are unlikely to stand still and watch their work being captured by more efficient AI-led tools. I go around telling everyone that the market is sticky and lawyers conservative, but I’d also wager that they can readily compete away any advantage of a smaller outfit that just uses the same tools available to anyone else.

At some point, Big Law is Big Law for a reason (relationships, reputation, resources, etc.), and once the marketing value of “AI” goes down, I am not sure introducing yourself as “Native” will help tremendously. In other words, if AI-first law firms assume that “traditional models” will remain out there to provide a foil to their distinctiveness, I doubt this bet will pay off.

More promising is the servicing of new legal tasks and demand that only an “AI-first” model can serve. But that requires identifying this new demand first: focusing on market creation, task redefinition, or service redesign - much, much harder tasks. And if the current roster of AI-first outfits cannot do that, then they are just temporarily over-signalling a capability that will diffuse.

1. Only last week, one law faculty I work with was pondering reintroducing literature classes to give students a feel for good writing, while I listened to a lawyer and a judge debate how long it takes for a junior to write production-ready prose (and they counted it in years).

2. Such as, hum, blogs and author-led newsletters.

3. I’d also expect a class-based discrepancy in attitudes, similar to the existing snobbery over industrial products.

4. I was about to press send, when I saw this story of a group of magazines being faulted by Belgium’s journalism ethics board for publishing AI-written stories without proper transparency about it.

5. That and the under-discussed point that transparency encourages “gaming”, while obscurity also has its benefits.
