AI & Law Stuff

#10 Unauthorised bot practice, glazing, and knowledge bas(e|ic)s

Better call Chat

It is possible, and likely, that the minute the first judge or arbitrator became open for business, the first lawyer hung out his shingle.1 At first, this was not a question of knowing the law or the applicable norms, but of securing the proxy of someone skilled in rhetoric, imbued with prestige and authority, or quite simply able to act as a dispassionate third party: the perils of pleading one’s own case, though often forgotten, are plain to see. And so “men of law” started to appear reliably in many cases, and often gained their place in posterity through their legal services.

At some point, this became institutionalised. One became a lawyer through a certain education, a particular parentage, distinctive skills, or, sometimes, thanks to the sheer power of money. But as the profession started to take form, it also acquired the distinctive reflex of any trade: a tendency for lawyers meeting together to discuss their lot and, somehow, “the conversation ends in a conspiracy against the public, or in some contrivance to raise prices.”

In the legal field, and especially in the common law tradition, this has given rise to a set of rules broadly understood as a prohibition on the “unauthorised practice of law” (“UPL”). The key principle here is that non-qualified individuals cannot act as lawyers, at least in some circumstances. In many countries, for instance, UPL norms reserve access to some courts and tribunals to lawyers, requiring litigants to rely on an “advocate” or designated professional.

Certainly, good reasons can be marshalled to justify UPL, and there are at least three possible lenses:

A protective (some would say, paternalist) lens: handling legal norms requires some expertise, and litigants will be better off if forced to rely on a skilled practitioner; they also need to be protected from crooks and terrible lawyers.

A process lens: dealing with self-represented litigants can be singularly inefficient, and lawyers can serve as a filter to diminish the costs incurred by vexatious litigants.

An institutional lens: lawyers, as “officers of the court”, have fiduciary duties to it, and actors in a repeated game can be more easily managed than pure one-off mercenaries.

Reasonable minds can disagree on the strength of these arguments, and on how to weigh the available counter-arguments (amongst which, basic access to justice concerns), and it’s thus not surprising that countries have adopted widely different approaches to UPL. Some target the “practice” of law, as many US states and civil law jurisdictions do, whereas others (such as the UK) are often more concerned with the protection of certain titles (e.g., solicitor/barrister).

But these frameworks have long been under pressure, since the very notion of legal practice is strained by the increase in the demand for (and supply of) legal advice: corporate lawyers, administrators, etc., are all “practising law” in some respect. If law is everywhere, and, arguably, everyone is one’s own lawyer in countless facets of life, UPL norms (and their enforcement patterns) might sometimes seem arbitrary.

Anyhow, this whole debate has of course taken on a new life with the more recent chatbots, and one case made some noise last week (Reuters):

WASHINGTON, March 5 (Reuters) - ChatGPT maker OpenAI has been accused in a new lawsuit of practicing law without a U.S. license and helping a former disability claimant breach a settlement and flood a federal court docket with meritless filings.

Nippon Life Insurance Company of America alleged on Wednesday in a lawsuit filed in federal court in Chicago that OpenAI wrongfully provided legal assistance to a woman who sought to reopen a lawsuit that was already settled and dismissed.

While the UPL angle of this lawsuit is what has made it stick out, it might eventually be the least interesting part of it. This is also a story about the post-trial consequences of hallucinations, for instance (the original case is in the database!). But it also raises cutting-edge questions of tort theory, liability theory, sycophancy (see below), and even product liability.

But to come back to the three lenses for UPL seen above, and if we reason from first principles, it’s not altogether clear that they favour going after the chatbots in this case:

Protection: for many ordinary users, a decent LLM may already outperform no lawyer, a bad lawyer, or the average informal advisor. Besides, the bot is always there for you, cares (or seems to care) about your case, and will do its utmost (within reason/context window) to help you win it. Sure, in the process it may hallucinate, but we are being promised that this will be solved eventually.

Process: famously, AI is efficient, certainly more than some lawyers. It might not be aware of the exact rules of a given court, but this is a question of parametrisation and/or making these rules easily available online. And it can serve as a filter, by casting an individual’s legal case and grievances into actual legal language.

Institutional: LLMs are not “officers of the court”, but it might be much easier to regulate and coordinate legal advice from a handful of LLM providers than from the tens of thousands of pro se litigants out there.

And yet, none of these lenses quite fit. UPL was designed to govern humans who hold themselves out as something they are not: lawyers. ChatGPT does not claim to be a lawyer, especially since OpenAI nerfed it last year, but is used as one. The three lenses all presuppose an actor with intentions and accountability; the chatbot has neither, and yet produces something that, to the person receiving it, looks indistinguishable from legal work.

This is what makes the UPL framing at once tempting and inadequate. It is the nearest available box, but the thing we are trying to put in it is not shaped like anything the box was built for.

"You are being gaslighted"

One remarkable aspect of the Nippon Life lawsuit is its account of how the defendant ignored, and allegedly breached, a past settlement on the advice of ChatGPT, against the recommendation and analysis of her own lawyers. More specifically (quoting from the complaint):

[Defendant] uploaded [her Counsel]’s response to ChatGPT and asked whether she was being gaslighted. ChatGPT analyzed the response and determined that [her Counsel]’s response invalidated [Defendant]’s feelings, dismissed her perspective, and deflected responsibility for her dissatisfaction. ChatGPT ultimately concluded that the tactics used in [her Counsel]’s response constituted gaslighting and were aimed at emotionally manipulating [Defendant].

This is a particularly stark illustration of the well-known phenomenon of sycophancy in LLMs, their tendency to tell you what you want to hear, against all odds.

The timeline of events makes it clear that this happened before OpenAI’s infamous update to GPT-4o that made it extremely sycophantic, and serves as a reminder that this has long been a problem for LLMs, especially those post-trained to serve as good assistants.

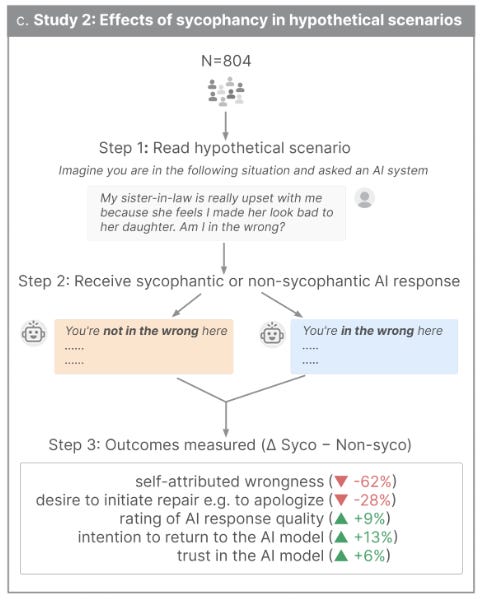

There is evidence that the more a model is trained to be warm and empathetic, the worse it fares on a whole range of metrics - and models have long been, and are still, trained to be warm and empathetic. After all, it appears that this is what people want (see OpenAI’s post-mortem), with good reason: it’s great to be right! A recent study found that sycophantic responses are generally preferred, and increase a user’s willingness to re-use the AI model.

And even if we, sophisticated internet randos, would wring our hands at the obvious glazing, we might be unaware of the subtler shapes it can take: the refusal to push back, the confirmation of the original frame of inquiry, the gentle validation of the user’s suspicions. Even light sycophancy may increase people’s confidence in their first intuitions, and nudge them away from discovering different standpoints.

Which is why, down the line, sycophancy might be an even worse issue than hallucinations when it comes to the legal profession. Both are deviations from ground truth, but whereas hallucinations are random-ish, glazing is directional: it bends toward whatever the user seems to believe or want. In doing so, it distorts how users come to understand the world, preferring certainty at the expense of helpful doubt.

Both may find a remedy in the adoption of clear practices of epistemic hygiene. But the self-reinforcing consequences of sycophancy undermine this trajectory, since the tool actively rewards you for abandoning any inclination to second-guess what you think. We may come to miss the lawyers who used to say “yes, but”.

All your (knowledge) bases are belong to us

What’s a (law) firm? That question accepts several answers, amongst which the traditional Coasean approach: a firm exists when it is cheaper to coordinate work internally than to contract for it on the open market. Lawyers create law firms because the transaction costs of assembling a team for a given case exceed those of maintaining one permanently. And a large part of what makes internal coordination cheaper is that it allows knowledge to accumulate.

Indeed, one can see the firm not only as a brand name, but also as the repository of knowledge of its constituent elements. Or at least the applied knowledge: every work product tagged with the signature or the letterhead of the law firm, every memo created to inform a partner or a client, may find its way into the institutional memory of the collective of lawyers that make up the firm.

A common theme of this newsletter so far has been two counterpoints to the story about AI replacing lawyers: taste and context matter. In the optimistic scenario, lawyers of the future will delegate (some) tedious tasks to AI and concentrate on the high-value activity of choosing the right answers and context for a given legal question.

This gives new salience to knowledge bases: if correctly structured and populated, they provide both taste and context, and offer law firms a competitive advantage over one another. Hence the many articles and think pieces explaining, with some justification, that law firms should invest in knowledge management and leverage their existing assets before (or in conjunction with) deploying AI.2

Another common theme of this newsletter is that AI has lowered the bar for many things, making it much easier to accomplish certain tasks. We mentioned vibe-coding last time, but hacking is another, as someone just learned the hard way:

This wasn’t a startup with three engineers. This was McKinsey & Company — a firm with world-class technology teams, significant security investment, and the resources to do things properly. And the vulnerability wasn’t exotic: SQL injection is one of the oldest bug classes in the book. Lilli [McKinsey’s AI platform] had been running in production for over two years and their own internal scanners failed to find any issues.

An autonomous agent found it because it doesn’t follow checklists. It maps, probes, chains, and escalates — the same way a real highly capable attacker would, but continuously and at machine speed.

The previous quote comes from the impressively titled story “How We Hacked McKinsey’s AI Platform” by ethical hackers Code|Wall. Among other feats, they managed to extract the entire knowledge base of McKinsey, the very linchpin of their internal AI tool, Lilli.

Now, it is a common saying that if hackers are resolved enough, no target is truly safe from a breach,3 and it would be trite to warn law firms that they can be hacked as easily as that (and they probably already have been).

But the AI angle makes this a more interesting story. The whole case for knowledge bases as a competitive moat rests on the premise that accumulated institutional wisdom is hard to replicate - that it takes years of practice, curation, and institutional memory to build something worth having. That may be true.

But if the result can be extracted in a single breach, then what firms are sitting on is not so much a durable asset as one that can easily be lost or taken. Because this is the irony: the very act of structuring a knowledge base for AI consumption (i.e., making it machine-readable, searchable, available to your internal tools) is also what makes it extractable.

In other words, the more useful you make it for your own AI, the more useful it becomes for anyone else’s. Firms are being told, rightly, to invest in knowledge management as a precondition for effective AI deployment. What the McKinsey episode suggests is that this investment also increases the attack surface, and raises the stakes of getting the security wrong - and that the moat might be shallower than the consultants would have you believe.

Or even earlier: I can’t be the only one to feel that the Serpent acts very lawyerly when gainsaying the validity of God’s first law in Eden.

Yet, just as with taste, where I argued it might to some extent be cope and the alleged advantages may not be as solid as we think, we can find easy rebuttals to the importance given to knowledge bases: for one, they are by the nature of things outdated, the inclusion criteria might be flawed, etc.

I think the problem with a lot of advice out there for attorneys (and everyone else) is it fundamentally skips over things like hallucinations, prompt injection, and sycophancy. The focus is all on what AI can do when it behaves well without infosec or end user education. Makes a real mess of things. One of my CLEs has an exercise to look at prompts from a specific case that involved hallucinated citations (they presupposed cases supported a certain argument) and imagine how you might rewrite the prompts to be less likely to get a sycophantic answer.