AI & Law Stuff

#7 - HR grievances, "taste", and a Twitter beef

Flooding the (HR) Zone

In any activity or setting where humans gather, interpersonal issues are bound to arise. Dave has spoken wrongly to Jane, François has gulped Francine’s lunch, or Alice has unfairly taken credit for Bob’s work. Many of these conflicts can likely be settled by some form of direct communication from one party to the other, but others can’t, and you may not always want to confront, face-to-face, whoever wronged you.

These interpersonal problems are even harder to solve when hierarchy is taken into account, as happens in most corporations. Grievances can arise every time the scaffolding of authority (clear roles, mutually acceptable boundaries, etc.) that allows taking and giving orders is allegedly breached, and hierarchy makes resolution harder: sure, speaking with the manager could help, but what if the manager is the party in the wrong?

The traditional answer to this kind of conundrum has been to task a third party with the resolution of such grievances, a third party meant to, precisely, “recognize no face in judgment”.1 This not only relies on the consensual triad of dispute resolution, which is the basic structure of all adjudication, but also offers a formal way of resolving grievances: most often, third-party resolution will use a process and a medium - i.e., text - designed to soothe passions and dispense with interpersonal communication between the parties in dispute.

In the context of a corporation, that third-party role has long been delegated, at least in part, to the Human Resources (“HR”) department: if you have a grievance, you would typically have to take it to HR, which will set in motion a process to realign interests, passions, and reasons in a way that hopefully satisfies everyone - or escalate it to other dispute-resolution systems (and the legal department) in grave or important cases.

Can that solve all interpersonal problems? No, not entirely, and by design. As legal philosophers and political scientists have noted for years, adjudication - of whatever form - cannot deal with every kind of problem and grievance, as some are too far removed from the ideal-type of a legal dispute: two well-delineated, conflicting interests that lend themselves to a right/wrong dichotomy. And so, in every company, the grievance resolution process will require some effort from you: framing your grievance in terms HR can understand, often with substantial personal input such as meeting an HR person, some kind of mediation, etc.

Of course, you see where I am going here: all this is friction, a friction that forces you to decide whether you are actually upset enough to go through the trouble. The long reports you have to fill in and the endless meetings with HR both serve an informational purpose (they need to know what you are annoyed about) and operate as a bottleneck: expressing your grievance in a way that is intelligible to HR might prove hard, or you might realise that you don’t have a case here.

But now there is Artificial Intelligence (“AI”). The Financial Times reports:

Anna Bond, legal director in the employment team at Lewis Silkin, used to receive grievances that were typically the length of an email. Now, the complaints she sees can run to about 30 pages and span a wide range of historical issues, many of which are repeated.

[…]

Ministry of Justice figures showed new employment tribunal cases brought by individuals increased by 33 per cent in the three months to September, while concluded cases decreased by 10 per cent, compared with a year earlier. The government expects cases to increase, due to the new Employment Rights Act.

But AI is not simply used to expand the volume of text and inflate some (otherwise unworthy) grievances: its role is in fact even subtler, in convincing people that some of these grievances are worth taking up to begin with:

Louise Rudd, an adviser to workplace mediation service Acas, says employees can draw unrealistic expectations about the strength of their claims, and “in some cases, it appears that AI has provided incorrect or misleading information” including non-existent precedents. In others, individuals may ask for advice and will be given non-existent case law or incorrect interpretations by AI, which they may try to use to support their position against their employer.

Formal processes were meant to cool tempers by forcing people to slow down. AI does the opposite.

In past newsletters I have suggested that the issue of slop flooding the system will require introducing new forms of friction: where text is too cheap to meter, you may want non-textual media to take on greater importance. Still from the Financial Times report:

To really get ahead of slop grievances, however, employers should intervene in problems before employees start considering an AI complaint. “Line managers should consider speaking with the employee, ideally face to face, as soon as possible, to understand the core complaints in the employee’s own words rather than responding point by point to the lengthy arguments put forward in writing,” Casey says.

[…]

Face-to-face conversations can set the scene for a satisfactory resolution for everyone. “This part of the process is often overlooked and many employees jump straight to a formal process. It may be something that employers want to encourage or promote more within their businesses.”

Which would put us back at square one: solving interpersonal grievances through personal interactions, the very limitation that a formal process before a third party was meant to deal with. There should be a sweet spot somewhere between “write it yourself” and “have a face-to-face meeting with the person you are complaining about”, but it remains to be invented.

The taste of AI

If you follow the discourse about “AI and jobs”, a discourse that - as I sought to convey last week - is much more interesting and varied than the basic “AI will take over all jobs” position, one argument has acquired particular salience: that AI and automation will be kept at bay because of a feature of human judgment that cannot be replicated, however impressive LLMs become.

Some put it as a matter of “judgment”; others as a question of “taste”; the more knowledgeable have insisted on the term phronesis (or φρόνησις, for the very cultured);2 and I could put it in terms of “discernment”. All these terms point to the same key insight: that there is much to the practice of law (or of many other activities, professional or not) that cannot be reduced to words and instructions, and thus cannot easily be automated. Under that view, LLMs, as statistical machines, are fundamentally unable to grasp that elusive quality of human beings.

Another way to put it is that humans and professionals are not mere cogs in a process: they are individuals who have acquired, through years of practice and experience, a feeling for the texture of their activity, a capacity to distinguish between what matters and what does not, what is right and what is not. This characteristic, the argument goes, cannot be distilled into tokens that would orient an AI meant to take over the job. As put by the Pope in Antiqua et Nova, machines may be able to choose, but they cannot decide.

(As Chris Clark rightly points out in a piece well worth reading, this entire insight also echoes Holmes’ famous statement that “[t]he life of the law has not been logic: it has been experience”.)

This points to something true, but for the sake of debate (and while this deserves an entire piece in itself), I’d like to point out a few limitations to this view.

First of all, one should beware of arguments that flatter the self - as notions of taste and judgment obviously do. These are characteristics that everyone would assign to themselves and thus offer no particular information;3 few would concede that they are not bringing something special, some kind of “judgment”, to their job. But that might not be true: not everyone can have confidence in their taste like Rick Rubin. In fact, sometimes, the human judgment at stake may not be optimal - mechanistic outcomes might be preferable, and AI could offer an excuse to dispense with the “human touch” in places and industries where it has historically led to worse results.

Second, a lot of things that register prima facie as taste might denote other things (e.g., pattern-matching from long experience, internalised conventions of a field, or simple risk aversion), and nothing may prevent LLMs from being equipped with the tools to identify or use a proxy for such things. Many tasks were previously thought unfeasible for AI, only for that belief to be proven wrong by the latest advances. Some instances of what we currently consider “judgment” might fall into that category.

Third, if taste varies across professionals, then AI may simply turbocharge those who have more of it. The threat, then, would not come from AI itself, but from the best professionals made vastly more productive by it. This is a scenario where AI does not level the field; it makes it steeper, thanks to “taste”.

So expect to hear more about judgment and taste as the last redoubt against automation. But while the argument has appeal, it may not be entirely valid, and it may tell us more about what professionals want to believe than about what AI cannot do.

Frontiers and laggards

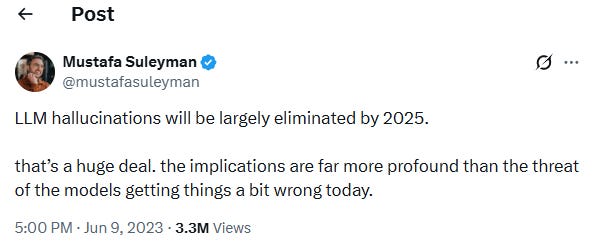

In the debate between AI hype-mongers and AI skeptics that we have already discussed, one argument in particular keeps popping up: hallucinations. Consider this 2023 tweet by Microsoft AI’s CEO:

This tweet was recently excavated and bandied around on X, creating a new debate around the problem of hallucinations, with people stating that from a consumer perspective (and taking into account various grounding tools), hallucinations are not a big deal anymore. Others were not as sure.

It was in this context that a debate erupted about hallucinations in the legal sphere, with one lawyer, prinz, venturing that:

First, hallucinations are no longer a problem. Consistent with the prediction you quoted from 2023, GPT-5.x almost never hallucinates. And overall, the percentage of inaccurate responses I get from GPT-5.2 Pro is lower than the percentage of inaccurate responses I would get from a competent junior associate (yes, fully accounting for hallucinations).

Second, people wildly overestimate the difficulty of most tasks performed by lawyers. […]

Put in another way, I feel that the biggest barrier to widespread adoption of AI by lawyers today is connectivity, interfaces, harnesses - *not* intelligence of the best models, and certainly not hallucinations.

To this, Gary Marcus, noted AI skeptic, replied citing and screenshotting the Hallucination database, pointing out that “lawyers keep getting busted for fake cases in their briefs […] Pretty much every day, at much a higher clip than two years ago”.

This elicited the following answer from prinz:

The cases you see in this database are instances of lawyers who *did not check AI's work* - and THAT is the problem. Without AI, these lawyers would not have checked the junior associate's work instead. There would not be any hallucinations as a result, but the judge would throw out the lawyer's argument as having been poorly constructed and researched. A fail case either way.

As a side note, my guess is that most of the hallucinated case law in this database was probably the product not of enterprise-grade LLMs that I use (GPT-5.2 Pro), but rather of something like the free tier of ChatGPT. Non-reasoning models are useless in actual professional work, including because they do hallucinate *much* more frequently than GPT-5.2 Pro. This should come as no surprise.

Since I have been cited and used as an argument in this debate, I felt I had to make a few points clear - and notably confirm prinz’s assessment of what’s going on in the database. Most often, and as far as the data indicates, we are indeed dealing with lawyers or pro se parties using older/inefficient models, and many lawyers who have been sanctioned - as I have argued elsewhere - had other issues, including a general disregard for the quality of their submissions. prinz is also right that this is, at bottom, a supervision problem - and that one needs to check their sources, AI or no AI.

But more fundamentally, this debate arose because the people involved were describing two different populations. There is a group of sophisticated users for whom hallucinations are not much of an issue, or at least not a greater issue than what LLMs have replaced. But there are also people who lack this level of sophistication and will copy and paste anything a model spits out - prompt included. The problem is that courts do not get to choose which population walks through their doors; and a legal system calibrated to the best possible use of AI will spend a lot of time dealing with the worst.

Or to put it another way, two key propositions -

AI has limits and will create issues in the legal system, be it only because of uneven deployment and model lags; and

AI is genuinely helpful for lawyers in countless ways.

… are not mutually exclusive; in fact, I think that they are both true at the same time. I have derived tremendous value from AI so far, because (I think) I know how to use it well. But this is a journey, and not everyone is there yet.

1. Deut. 1:17 (R. Alter translation).

2. For what it’s worth, Google searches for the term phronesis have seen a recent modest uptick.

3. One of my long-standing pet peeves is people describing themselves as “open-minded”, or a “critical thinker”, even though I doubt anyone would take the other side of that description.